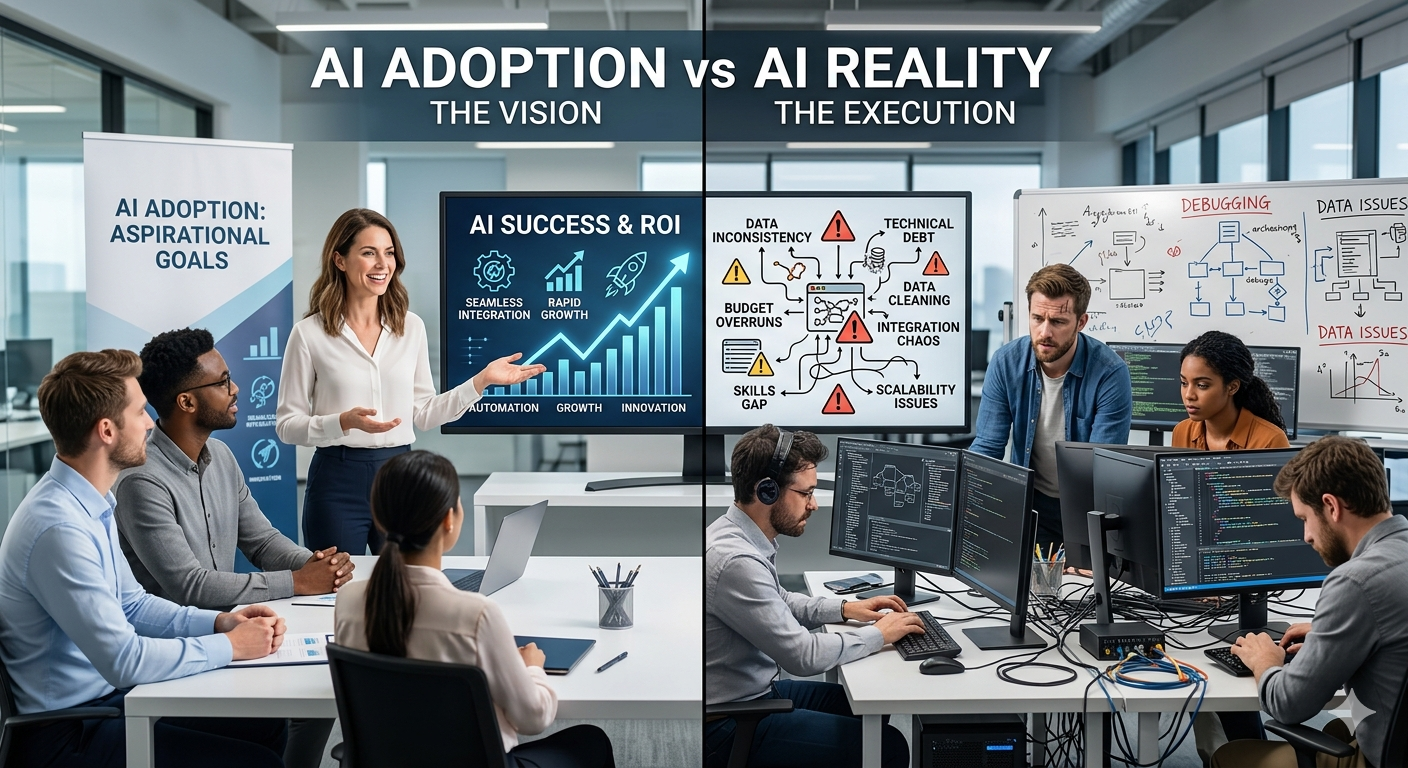

There is a version of the AI story that goes like this: companies saw ChatGPT, realized the future had arrived, moved fast, deployed AI everywhere, and transformed their businesses. That version makes for good investor decks. The reality is messier, more expensive, and far more interesting.

What actually happened is that almost every company launched AI initiatives at roughly the same time, many of them with little understanding of what production AI systems cost, how they behave at scale, and what it takes to keep them reliable. The gap between the AI demo and the AI production system turned out to be enormous, and most enterprises are still figuring out how to close it.

This piece is not about whether AI is real or valuable. It is. This is about understanding what building serious AI systems inside a company actually requires, what it costs, what breaks, and what you should be thinking about if you are an engineer, architect, or technology leader trying to navigate this space honestly.

The Rush That Started Everything

When OpenAI released ChatGPT in late 2022, something unusual happened in the enterprise technology world. Usually, new platforms take years to reach boardroom-level urgency. Cloud computing took most of the 2000s to become a board-level concern. Mobile took years after the iPhone before enterprise IT leaders were forced to take it seriously. AI did not have that grace period.
