I remember the day our shiny new AI code reviewer went live like it was yesterday. It was a Tuesday in early 2025, and our team at EchoSoft—a mid-sized dev shop cranking out enterprise apps—had just pushed the button on integrating GPT-4o into our GitHub Actions pipeline. We’d spent weeks fine-tuning prompts, benchmarking against human reviewers, and celebrating how it slashed review times from hours to minutes. “This is it,” I told the devs over Slack. “No more blocking PRs on nitpicks.” We high-fived virtually, popped a bottle of virtual champagne, and watched the first few PRs sail through with glowing approvals.

Then came PR #478 from junior dev Alex. A simple refactor of our auth module—nothing fancy, just swapping out a deprecated hash function for Argon2. The AI scanned it in seconds: “LGTM! Solid upgrade, no security flags.” Alex merged it. By Friday, our staging server was compromised. Attackers exploited a buffer overflow the AI had glossed over because, in its infinite wisdom, it hallucinated that our input sanitization was “enterprise-grade” based on a snippet from some outdated Stack Overflow thread it pulled from thin air. We lost a weekend scrubbing logs, notifying users, and patching the hole. The client? They bailed, citing “unreliable tooling.” That stung. We’d bet the farm on AI being our force multiplier, but it turned out to be a loaded gun.

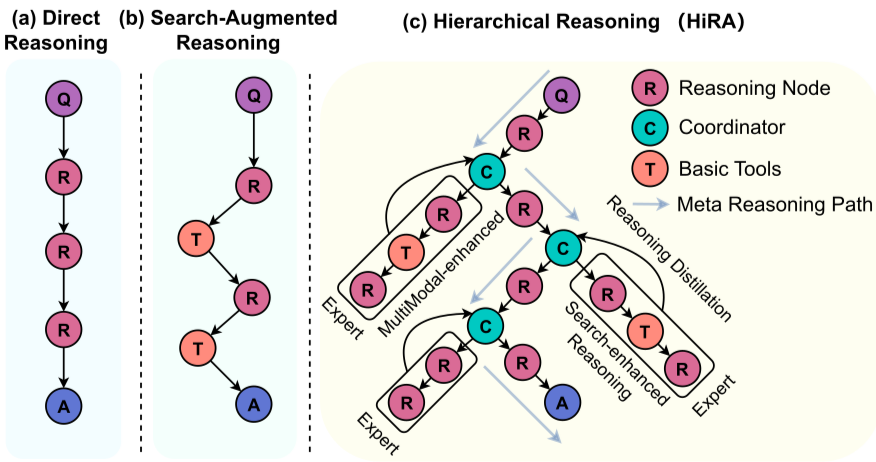

Why did this happen? Not because we picked a bad model—GPT-4o was crushing benchmarks left and right. No, it was the jaggedness. That term had been buzzing in AI circles for months, ever since Ethan Mollick’s piece laid it out clear as day: AI doesn’t progress smoothly like a rising tide; it advances in fits and starts, acing PhD-level theorem proving one minute and fumbling basic if-else logic the next. Our code reviewer was a poster child for it—flawless on boilerplate CRUD ops, but a disaster on edge-case vulns that humans spot with a coffee-fueled squint. We’d ignored the warning signs during our proof-of-concept phase, too dazzled by the 95% accuracy on synthetic datasets. In production, though? The cracks showed fast.

Source: Internet